What 10,000 Unreviewed Sales Calls Taught Us About Lost Deals

Your sales team made 200 calls last week. Your manager listened to maybe 5.

That's 195 conversations nobody reviewed. Nobody checked whether the prospect went quiet at minute six. Nobody noticed three different reps stumbling over the same objection. Nobody caught the moment a warm lead said "loop in my CTO next Tuesday," and nobody noticed when that commitment vanished from the CRM.

At CallOptix, we've processed over 10,000 B2B sales calls through our AI analysis platform. When teams go from spot-checking a handful of recordings to analyzing all of them, the same four patterns show up with almost eerie consistency. Doesn't matter if it's a 5-rep startup or a 50-person mid-market team.

Here's what keeps hiding in the calls nobody listens to.

What CallOptix Found Hiding in Unreviewed Sales Call Data

1. The top objection is almost never pricing.

Ask sales managers what their team hears most and the majority will say some version of "it's too expensive." CallOptix call data tells a different story.

Across the teams we've analyzed, the most frequent objection category is timing and internal prioritization. Prospects aren't saying "it costs too much." They're saying things like "we've got other fires right now" or "I'd need buy-in from two other teams before this moves."

That distinction changes everything about how you coach. If you're drilling pricing rebuttals when the real blocker is internal politics, you're spending training time on the wrong skill. And you won't catch this in a 5-call sample because the calls your manager picks aren't random. They're usually the long ones, the problem ones, or the ones from the rep who's struggling. That's a biased sample before you even hit play.

What better looks like: Teams that catch this early typically run objection audits monthly across their full call volume, not just the calls that felt off. When you see the real distribution, you can rebuild your battle cards around what prospects actually say instead of what managers assume they say.
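At its core, an objection audit is a frequency count over every call instead of a hand-picked few. The sketch below assumes each call has already been tagged with objection categories; the `calls` structure and the tag names are hypothetical placeholders, not CallOptix's schema. It ranks categories by their share of all objections raised.

```python
from collections import Counter

def objection_distribution(calls):
    """Return (category, share) pairs, most frequent first.

    `calls` is a hypothetical list of lists: one list of objection
    category tags per call (tag names are illustrative assumptions).
    """
    counts = Counter(tag for call in calls for tag in call)
    total = sum(counts.values())
    if total == 0:
        return []
    return [(tag, n / total) for tag, n in counts.most_common()]

# Illustrative input only, not real call data.
calls = [
    ["timing"],
    ["internal_buy_in", "timing"],
    ["pricing"],
    ["timing"],
    [],
]
for tag, share in objection_distribution(calls):
    print(f"{tag}: {share:.0%}")
```

Run monthly over the full call set, the ranking is what matters: if the category at the top isn't the one your battle cards are built around, that's the gap.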

2. Every team has a "dead zone" where prospect engagement drops off.

There's a specific point in most sales conversations where the energy shifts. Responses get shorter. Questions dry up. You can almost hear the prospect checking out.

We measure this at CallOptix through real-time sentiment tracking and talk pattern analysis. The trigger is different for every org.

For some teams, the dead zone hits right when the rep pivots from discovery to pitching. For others, it's the pricing conversation. But here's the one that surprised us: for a lot of teams, engagement drops when a rep launches into a demo without connecting it to something the prospect said they cared about. The prospect asked about onboarding speed, and the rep showed them the analytics dashboard. That disconnect kills momentum faster than a bad price.

You can't fix your dead zone if you can't see it. And you won't see it by listening to 5 calls a week.
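CallOptix uses sentiment tracking and talk-pattern analysis for this. To make the idea concrete, the sketch below swaps in a much cruder stand-in. It assumes a hypothetical per-call list of prospect word counts per turn, then flags the first stretch of turns where the prospect's average response length falls below half of their opening baseline. The window size and the 0.5 threshold are arbitrary assumptions, not tuned values.

```python
def find_dead_zone(prospect_words_per_turn, window=3, threshold=0.5):
    """Return the start index of the first low-engagement window, or None.

    Crude heuristic (an assumption, not CallOptix's method): compare a rolling
    mean of prospect words per turn against the mean of the first `window` turns.
    """
    turns = prospect_words_per_turn
    if len(turns) < 2 * window:
        return None  # too short to compare against a baseline
    baseline = sum(turns[:window]) / window
    if baseline == 0:
        return None
    for start in range(window, len(turns) - window + 1):
        rolling = sum(turns[start:start + window]) / window
        if rolling < threshold * baseline:
            return start
    return None

# Hypothetical call: long answers during discovery, short ones after the pitch.
turns = [40, 35, 50, 45, 30, 12, 8, 5, 6]
print(find_dead_zone(turns))  # → 4
```

Applied to a single call, a heuristic like this is noisy. The signal comes from seeing where the flagged index clusters across hundreds of calls, for example right after the switch from discovery to demo.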

What better looks like: Once you know where your team's dead zone lives, the fix is usually straightforward. A 15-minute role-play focused on that specific transition. A talk track adjustment. A rule like "never demo a feature you haven't tied to something the prospect said in the last 3 minutes." Simple changes, but only if you have the data to know where to apply them.


3. Your best closer's playbook is invisible to everyone else.

This one frustrates me the most, honestly.

Your top performer does something different. In CallOptix's data, the gap is measurable:

- Talk-to-listen ratio: Top closers sit around 35:65. Average performers run 55:45 or worse.

- Discovery depth: Top reps ask roughly 3x as many questions in the first five minutes, before mentioning the product at all.

- Stall handling: When a prospect says "just send me an email," top reps redirect with a specific question. Average reps say "sure, I'll send that over" and lose the conversation.
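Once a call has been split into speaker segments, the first of those benchmarks is simple arithmetic. This sketch assumes a hypothetical list of `(speaker, seconds)` segments labeled "rep" and "prospect"; real transcripts will use whatever speaker labels your tooling emits.

```python
def talk_listen_ratio(segments):
    """Return (rep_pct, prospect_pct) as whole-number percentages.

    `segments` is a hypothetical list of (speaker, seconds) tuples where
    speaker is "rep" or "prospect"; any other label is ignored.
    """
    rep = sum(sec for who, sec in segments if who == "rep")
    prospect = sum(sec for who, sec in segments if who == "prospect")
    total = rep + prospect
    if total == 0:
        return (0, 0)
    rep_pct = round(100 * rep / total)
    return (rep_pct, 100 - rep_pct)

segments = [("rep", 60), ("prospect", 150), ("rep", 45), ("prospect", 45)]
print("%d:%d" % talk_listen_ratio(segments))  # → 35:65
```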

But without systematic call analysis, nobody extracts these patterns. The playbook lives inside one person's recordings. Nobody turns it into coaching material. And when that rep takes another offer (which top performers tend to do), the playbook walks out the door with them.

What better looks like: CallOptix scores every call against performance benchmarks and surfaces the specific behaviors that separate closers from the rest of the team. We've seen managers build entire onboarding programs from patterns they didn't know existed until they looked at the data. Even just sharing 3 call clips from a top rep in a team meeting can shift how junior reps approach their next conversation.

4. Micro-commitments are disappearing from your pipeline.

"I'll send that case study by Thursday." "Let's get your VP on a call next week." "I'll follow up with enterprise pricing."

These small promises, from reps and prospects both, are the connective tissue of a live deal.

In CallOptix's data, roughly 40% of specific follow-up commitments made on sales calls are never fulfilled.

Reps forget. Prospects ghost. And deals that looked healthy on Monday are cold by Friday because neither side did what they said they would.

Your CRM can't catch this because CRM fields don't record what was actually said on a call. They record what the rep remembered to type afterward, which is a very different thing.

What better looks like: CallOptix flags every commitment made during a conversation and tracks whether it shows up in the follow-up activity. But even without tooling, you can start here: after every call, have reps log the exact commitment they made and the exact date it's due. Not "follow up next week." The specific thing, the specific day. Accountability goes up the moment you write it down.
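The manual version of that habit fits in a few lines. This sketch assumes a hypothetical log in which each entry records the deal, the exact commitment, its due date, and whether it was done. The `Commitment` fields and the deal names are illustrative, not any CRM's schema. It returns the open commitments that are already past due.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Commitment:
    # Hypothetical fields for a manual commitment log.
    deal: str
    what: str   # the specific promise, e.g. "send case study"
    due: date   # the specific day, not "next week"
    done: bool = False

def overdue(log, today):
    """Return open commitments whose due date has passed, oldest first."""
    late = [c for c in log if not c.done and c.due < today]
    return sorted(late, key=lambda c: c.due)

log = [
    Commitment("Acme", "send case study", date(2024, 5, 2)),
    Commitment("Acme", "book VP call", date(2024, 5, 9), done=True),
    Commitment("Globex", "send enterprise pricing", date(2024, 5, 6)),
]
for c in overdue(log, today=date(2024, 5, 8)):
    print(c.deal, c.what, c.due.isoformat())
```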

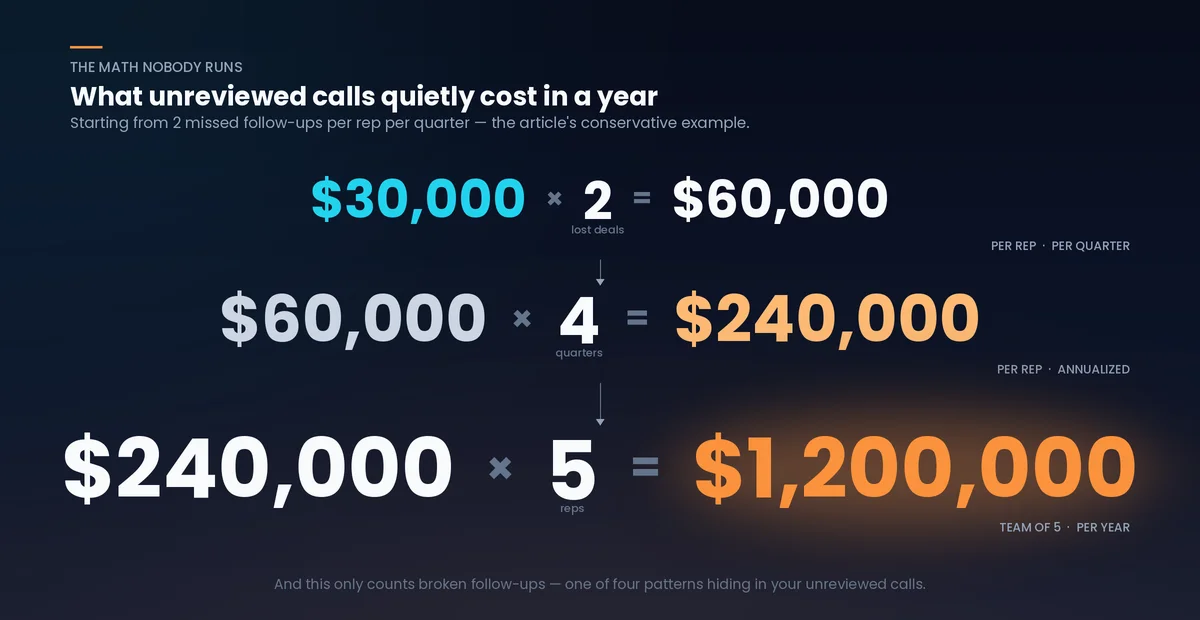

Quick Math: What This Is Actually Costing You

Here's an exercise that takes 30 seconds and will probably ruin your afternoon.

Take your average deal size. Multiply it by the number of deals your team lost last quarter that made it past the first call but didn't close. Now ask yourself: how many of those losses came from one of the four patterns above?

You won't know the exact number. That's the point.

If your average deal is $30,000 and broken commitments alone killed just 2 deals last quarter, that's $60,000 in revenue you'll never trace back to a missed Thursday email. If misidentified objections sent your coaching in the wrong direction for a full quarter, the compounding cost across your team is significantly higher.
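The same back-of-the-envelope estimate, written out. The per-pattern deal counts are guesses you supply, not measured data. The example input is just the $30,000 × 2 broken-commitments case from the paragraph above.

```python
def estimated_loss(avg_deal_size, deals_lost_by_pattern):
    """Return (loss per pattern, total loss) as avg_deal_size x deals lost.

    deals_lost_by_pattern maps a pattern name to your own estimate of how
    many deals it cost you last quarter (an input you supply, not data).
    """
    per_pattern = {p: avg_deal_size * n for p, n in deals_lost_by_pattern.items()}
    return per_pattern, sum(per_pattern.values())

per_pattern, total = estimated_loss(30_000, {"broken commitments": 2})
print(f"${total:,}")  # → $60,000
```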

These aren't dramatic estimates. They're what teams consistently uncover once they start looking at their call data with any real rigor. The problem is invisible until you measure it.

Do the math for your own team. Count last week's calls. Divide by how many your manager actually reviewed: that's how many calls go by for every one that gets heard. Sit with that number for a second.

Why Spot-Checking Calls Doesn't Cut It

None of this is about blaming managers. Most sales leaders genuinely want to coach better. They listen when they can. They pull recordings when a deal falls apart. They sit in on live calls when the calendar allows it.

The problem is math, not motivation.

If your team runs 200 calls a week and a manager reviews 5 to 10, that's a 2.5% to 5% sample. You wouldn't make product decisions from 5% of user feedback. You wouldn't ship a feature based on 5 support tickets. But that's exactly how most teams make decisions about their sales process, every single week.
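Here is that sample-size arithmetic spelled out: coverage is calls reviewed divided by calls made. The 200-call week is the example from the paragraph above.

```python
def review_coverage(calls_made, calls_reviewed):
    """Share of calls a manager actually heard, as a fraction of 1."""
    if calls_made <= 0:
        raise ValueError("calls_made must be positive")
    return calls_reviewed / calls_made

for reviewed in (5, 10):
    cov = review_coverage(200, reviewed)
    print(f"{reviewed} of 200 reviewed: {cov:.1%} coverage, {1 - cov:.1%} unheard")
```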

Five calls give you stories. Five hundred calls give you patterns. And patterns are what actually move the number.

A fair caveat: even analyzing every call won't help if nobody acts on what the data shows. We've seen teams pull incredible insights from CallOptix and then do nothing with them because the coaching workflow wasn't there. Data without a feedback loop is just noise. The analysis is the starting point, not the finish line.

The Question Worth Asking

Every SaaS sales team that starts analyzing calls at scale finds at least two of the four patterns above in the first week. We haven't seen an exception yet.

So the question isn't whether these problems exist in your pipeline. They almost certainly do.

The question is which one is doing the most damage right now.

Is it the misidentified objections? The dead zone? The invisible playbook? Or the broken commitments quietly bleeding out your deals?

The only way to answer that is to actually look at the calls.

This is why we built CallOptix. If you want to see what your unreviewed calls are hiding, start with a free call analysis.

Frequently Asked Questions

What percentage of sales calls do managers typically review?

Most B2B sales teams manually review between 2% and 5% of their total call volume. CallOptix's data across SaaS organizations shows that in a team making 200 calls per week, a manager typically listens to 5 to 10 recordings. The remaining 95% or more of conversations, along with the objection patterns, engagement signals, and coaching opportunities inside them, go completely unexamined.

What are the most common hidden patterns in B2B sales calls?

According to CallOptix's analysis of over 10,000 B2B sales calls, four patterns show up consistently when teams start reviewing calls at scale: the real top objection is usually timing or internal prioritization (not pricing), every team has a measurable "dead zone" where prospect engagement drops, top performers use distinct techniques that aren't being replicated across the team, and roughly 40% of follow-up commitments made on calls are never fulfilled.

What is the real cost of not analyzing sales calls?

The cost depends on your deal size and loss rate, but the math compounds quickly. CallOptix data shows that broken follow-up commitments alone account for a significant share of mid-pipeline deal deaths. For a team with a $30,000 average deal size, even 2 lost deals per quarter from unfulfilled commitments represents $60,000 in untracked revenue loss. Factor in misidentified objections and unreplicated top-performer techniques, and the number grows considerably.

What is a "dead zone" in a sales call?

A dead zone is the point in a sales conversation where prospect engagement measurably drops. CallOptix identifies dead zones through sentiment analysis and talk pattern tracking. The most common triggers include reps jumping to a product pitch before finishing discovery, demonstrating features that don't connect to the prospect's stated problem, and extended monologues of three or more minutes without asking a question. The dead zone location is different for every sales team, which is why it requires data to diagnose.

How many sales calls do you need to analyze before patterns become visible?

From CallOptix's experience, useful signals start appearing in as few as 15 to 20 calls, but reliable, coachable patterns typically need 50 or more calls over a 2 to 4 week window. Five calls give you anecdotes. Fifty give you data you can actually build a coaching plan around.

Does call analysis work for teams selling across multiple markets and languages?

Yes. CallOptix supports AI-powered call analysis in over 100 languages, which makes it one of the few platforms built for global and multilingual sales operations. This includes transcription, sentiment analysis, objection tracking, and agent scoring across languages. For SaaS companies with international teams or reps who switch between languages on the same call (Hinglish, Spanglish, or any code-switching pattern), this matters more than most teams realize until they try analyzing a bilingual recording with a single-language tool.
